Zombie Sites and Lost Resources: What to do in the Face of the Data Deluge?

Written by Guy Plunkett III, PhD in Bacteriology

As a bacteriologist and genomics researcher at the University of Wisconsin, and in my current role as Senior Scientist at DNASTAR, I have always found data to be central to successful scientific pursuits. I’ve been in the research game long enough to remember having to travel to another institution’s library in order to access a particular journal, or repeatedly badgering other researchers for data that they had to copy by hand or send as photographs. Now, in this modern Age of Google, we take it for granted that literature and data will be available online, including decades’ worth of digitized back issues. Some journals exist in digital form only; even the venerable Proceedings of the National Academy of Sciences has announced it will stop publishing its print edition in 2019.

Online supplemental data allows the tyranny of page limits to be bypassed, and now large data sets and sequence databases abound.

But behind the screen of this cornucopia, even as we sometimes feel we are drowning in data, there lurks the specter of impermanence. Unlike the printed page, digital data can disappear. People move on, retire, or die; journals cease to publish; funding is lost and sites shut down. Literature, protocols, software code, images, database schema & content are lost forever.

Zombie Sites and Disappearing Data

A zombie site is a website that suffers from a chronic failure to update its contents. In this context I am not referring to sites that merely look and feel the same as they did when first launched 20 years ago (data is data, after all), but rather to ones whose content has not been updated in years. Even old data has some value, especially when it represents curatorial effort; far worse is the related issue of lost resources: sites that no longer exist at all, or those where a domain name was allowed to lapse and was then resurrected for some unrelated purpose. At best, a URL is relegated to a landing page or a redirect, and you might still be able to find the information you seek. In other cases you are faced with a variation of “404 Not Found.”

RIP Scientific Databases

Some examples follow. These are not meant to demean anyone’s work, but rather to bemoan its loss.

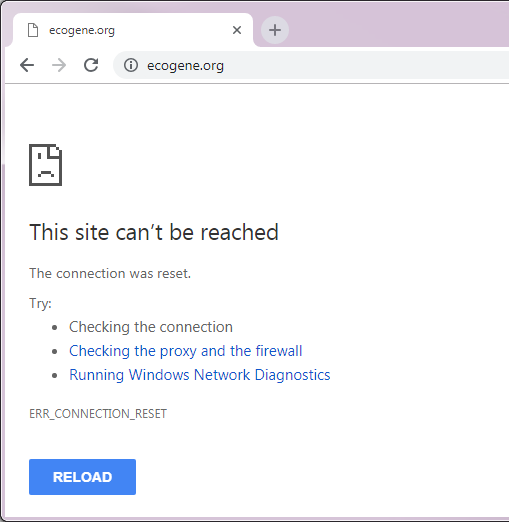

- EcoGene

- This database evolved from pre-genome era studies dating back to the early 1990s, and by 2000 its online incarnation had become one of the preeminent resources of information regarding the genome sequence of Escherichia coli K-12. In 2007 EcoGene became the source of annotation updates for the GenBank and RefSeq entries, coordinating a multi-database collaboration. The database underwent a wholesale upgrade and expansion in 2013. But EcoGene was largely the work of a single individual, Dr. Kenneth Rudd; funding became an issue, and updates were sporadic by the end of 2017. Dr. Rudd retired, and an examination of the Internet Archive’s Wayback Machine suggests EcoGene was last available around March 2018. Current attempts to access the site consistently time out, and this prominent resource is no longer available.

- The Comprehensive Microbial Resource (CMR)

- Originally from The Institute for Genomic Research (TIGR) in 2001, and subsequently from the J. Craig Venter Institute (JCVI), this database combined information from all complete and publicly available bacterial and archaeal genomes. The CMR contained automated structural and functional annotation across all those genomes to provide the consistent data types necessary for effective mining of genomic data. While some of its functionality persists (JCVI’s TIGRFAM protein family models, for example), the database itself was shut down by 2014.

- Big Picture Book of Viruses

- Established in 1995, this site was intended to serve as both a catalog of virus pictures on the Internet and as an educational resource to those seeking more information about viruses. As recently as August of this year it was profiled in a “Best of the Web” column by Genetic Engineering & Biotechnology News. The site does remain live, but it was last updated in 2007. Some links are broken, and none of the emails listed for contacts or to submit new information seem to be viable. It is a classic zombie site.

- Lost supplemental materials

- In 2003, Dr. Svetlana Gerdes and colleagues published a genome-wide assessment of essential genes in Escherichia coli MG1655. At that time journals did not routinely host supplementary material on their own servers, and the large data tables were made available on three different author-arranged sites. By 2014 none of those links was still active, having suffered the consequences of site death as individuals retired or moved on. Dr. Gerdes reached out to me, and since then I have been hosting a full copy of the Supplemental Data at UW-Madison. There was still the issue of discoverability; after some urging, the original journal eventually added a pointer to the new site in the online version of the paper, as a “test case” for this type of situation.

In this last example there was at least a resolution that brought the data back to availability. But this is certainly not the first or the last case of this issue to arise.
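The kind of Wayback Machine check described for EcoGene can also be scripted. The following is a minimal sketch, assuming the Internet Archive's public availability endpoint and its JSON response layout (`archived_snapshots` → `closest`); the function names are hypothetical, and `example.org` is a placeholder rather than the address of any site discussed here.

```python
import json
from typing import Optional
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed endpoint: the Internet Archive's Wayback availability API.
AVAILABILITY_ENDPOINT = "https://archive.org/wayback/available"


def build_availability_url(site: str, timestamp: Optional[str] = None) -> str:
    """Build the query URL for a site.

    If given, timestamp (e.g. "20180301") asks for the snapshot
    closest to that date instead of the most recent one.
    """
    params = {"url": site}
    if timestamp:
        params["timestamp"] = timestamp
    return AVAILABILITY_ENDPOINT + "?" + urlencode(params)


def closest_snapshot(payload: dict) -> Optional[dict]:
    """Pull the closest snapshot out of a decoded response, or return None."""
    closest = payload.get("archived_snapshots", {}).get("closest")
    if not closest or not closest.get("available"):
        return None
    ts = closest["timestamp"]  # formatted as YYYYMMDDhhmmss
    return {"url": closest["url"], "date": f"{ts[0:4]}-{ts[4:6]}-{ts[6:8]}"}


def lookup(site: str, timestamp: Optional[str] = None,
           timeout: float = 10.0) -> Optional[dict]:
    """Query the live service (requires network access)."""
    query = build_availability_url(site, timestamp)
    with urlopen(query, timeout=timeout) as response:
        return closest_snapshot(json.load(response))
```

Calling `lookup("example.org", "20180301")` would return the archived URL and capture date of the snapshot nearest March 2018, or `None` if the site was never archived. A snapshot only shows that a page was captured on that date; it says nothing about whether the underlying database was still being maintained.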

Preserving Data: What can be done?

Many journals have standing policies requiring DNA sequences to be deposited with the International Nucleotide Sequence Database Collaboration (INSDC) before a paper can be accepted. The INSDC’s three government-funded partner organizations, the DNA Data Bank of Japan (DDBJ), the European Nucleotide Archive (ENA) at EMBL-EBI, and GenBank at NCBI, collaborate such that data submitted to any one of them are shared among all three. Similarly, researchers can deposit raw DNA sequencing data and alignment information in the Sequence Read Archive (SRA), and gene expression data (sequence or microarray based) in the Gene Expression Omnibus (GEO).

Building on those sequence-oriented examples, other public data archives have been developed. In addition to discipline-specific sites, there are generalist repositories like the Dryad Digital Repository. As researchers, publishers, and funding agencies recognize the value of underlying data as a product of research, mandated public data archiving is becoming a given in many fields. Journals in a variety of disciplines have adopted a Joint Data Archiving Policy (JDAP), in which the journal “requires, as a condition for publication, that data supporting the results in the paper should be archived in an appropriate public archive.” Examples of recommended repositories are available on these pages from Nucleic Acids Research and Springer Nature.

DNASTAR Data Tricks and Treats

If you can’t tell, at DNASTAR we’re enthralled with data and understand the need for researchers to have easy data solutions. Our Lasergene Bioinformatics Software Suite is fully integrated with databases like PDB and GenBank to allow you to download the data you need, right to your project. Whatever data source you use, Lasergene also provides tools for analysis and annotation of a wide variety of file types.

Other spooktacular data tricks Lasergene can provide:

- SANGER SEQUENCE ASSEMBLY

- Validate NGS data with Sanger reads

- INTEGRATED DATA ANALYSIS AND VISUALIZATION

- Visualization and analysis of genomic variation, gene expression and gene regulation data in a single project

- IMPORT YOUR OWN DATA

- DNASTAR Lasergene applications can import and export a wide variety of file types. Find out what files you can import here.
